The best AI product might not look like what we imagined - what Clawdbot (now Moltbot) proved

When people say “AI agents,” we often picture something that lives in the browser: opening tabs, filling forms, clicking buttons, finishing tasks end-to-end. That vision is real and powerful.

But the product that went viral wasn’t exactly that.

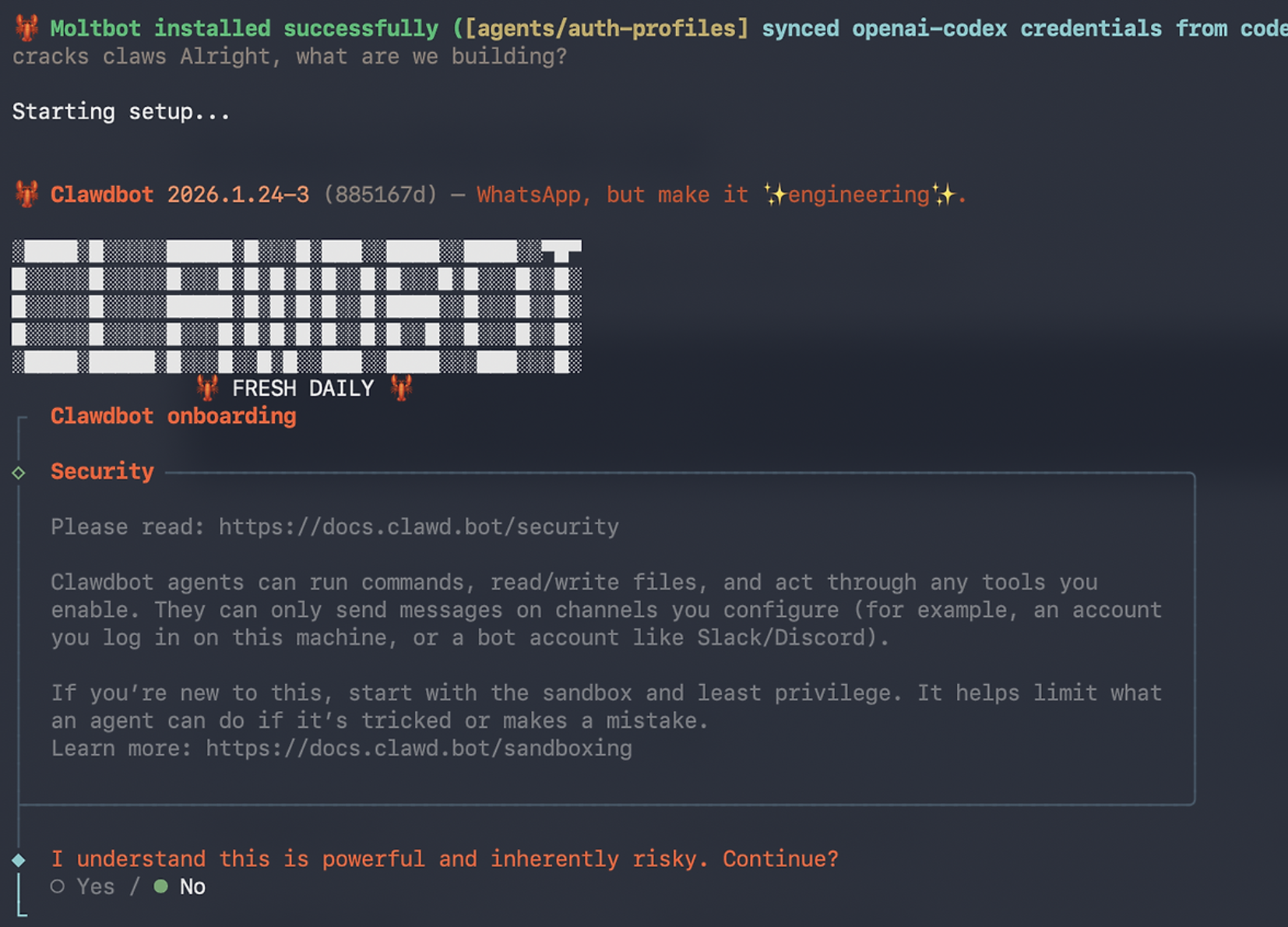

Clawdbot (now more commonly referred to as Moltbot, with the codebase continuing as OpenClaw) felt like a different category: a local-first personal agent that shows up in the chat apps you already use, executes tasks, and quietly becomes part of your daily workflow. And it scaled unbelievably fast — reaching six-figure GitHub stars at the time of writing.

1) The “default” AI product might not be a browser agent

A lot of modern agent demos follow the same storyline:

- open the browser

- navigate pages

- fill forms

- submit, done

It works. But what Moltbot highlighted is a different truth:

The best UI might be no new UI at all.

Instead of asking users to adopt a new app, it drops into WhatsApp/Telegram/Slack — the channels you already open every day — and works there. That one decision removes a huge amount of friction. AI can be brilliant, but the moment users have to “learn the product,” you lose people. Chat-as-interface avoids that almost entirely.

2) Why it felt like a product: local-first + real files for memory and preferences

What impressed me most is how clearly the project defined the product boundaries:

- the agent runs locally (your machine, your environment)

- settings, preferences, and memory live as actual folders and Markdown files

- you extend capabilities via skills, install/update them, and shape the agent over time

This is oddly convincing. Not “the AI magically remembers,” but “here’s where it lives; you can inspect it.” If something goes wrong, you can open the folder, edit the rules, delete the memory, or tighten permissions. In practice, it feels less like a chatbot and more like a local AI operating layer.

3) Why it grew so fast: prototyping speed became product advantage

Moltbot’s lesson is blunt:

Great AI products aren’t only about smarter models.

They come from teams that can prototype fast, ship fast, and iterate inside real user workflows.

Agent products especially demand this. They’re not just model demos — they require “reality layers”:

- channel integration (chat apps, notifications, permissions)

- execution environment (local, server, security)

- failure handling (retries, rollbacks, guardrails)

- state and memory (context, compaction, durable records)

Move slowly and you get a demo. Move fast and you get a product — even if the model isn’t perfect. Moltbot proved that in public.

4) AI agents are already going mainstream (and the efficiency is real)

“Agents will become mainstream” is no longer a prediction — it’s a pattern you can observe.

People want one thing:

- say what you want in natural language

- the system decomposes it

- executes steps

- returns results

When that loop works, the efficiency jump is not subtle. Repetitive work — summaries, reminders, lightweight automation, drafting messages/emails — becomes instantly cheaper.

But there’s an important tradeoff: once an agent gets execution privileges, security becomes a first-class concern. Powerful local + messaging + tool access also means higher risk. Guardrails like DM pairing, scoped permissions, and sensitive-data hygiene aren’t “nice-to-have features” — they determine whether the product is trustworthy.

Conclusion: the AI product we wanted might be “workflow-native,” not “UI-heavy”

Moltbot didn’t win by showing a flashy futuristic interface.

It won by:

- minimizing UI

- living inside existing chat workflows

- being local-first

- making memory/preferences legible and controllable

And the market responded.

Lately, I keep coming back to these points:

- The best AI product may not look like what we imagined.

- Finding that shape requires fast prototyping and real deployment.

- AI agents are already being normalized — and the efficiency is real.

- The next differentiation might be less about “the model” and more about “how fast you can productize and iterate.”